- Epilepsy is a neurological disorder, and a seizure is its characteristic symptom. Jerks are one kind of seizure.

- In 1981, the International League Against Epilepsy (ILAE) published a seizure classification that is still widely used today:

  - Focal (partial) seizures: seizures limited to, or originating in, one hemisphere of the brain

    - simple partial seizures (simple: consciousness is not impaired)

    - complex partial seizures (complex: consciousness is impaired)

    - partial seizures evolving into secondarily generalized seizures (about 1/3 of partial seizures)

  - (Primary) generalized seizures: seizures involving the whole brain

    - absence seizures (somewhat like a brief blackout), formerly called petit mal

    - tonic-clonic seizures (somewhat like whole-body jerking), formerly called grand mal

    - many other generalized seizures

  - Unclassified epileptic seizures

- In 1989, the ILAE published an epilepsy classification that is still widely used today:

  - Focal epilepsies

    - symptomatic (known cause) or cryptogenic (cause unknown): e.g., temporal lobe epilepsy (TLE)

    - idiopathic (presumed genetic cause): e.g., benign childhood epilepsy

  - Generalized epilepsies

    - symptomatic or cryptogenic (cause unknown): e.g., West syndrome, Lennox-Gastaut syndrome

    - idiopathic: e.g., childhood absence epilepsy, juvenile absence epilepsy

  - Epilepsies undetermined whether focal or generalized

- During a seizure, the subject is said to be in the ictal state; between seizures, in the interictal state. Because seizures are short (a few seconds to a few minutes) compared with the interictal periods, interictal EEG is far easier to obtain.

- EEG is the gold standard for epilepsy diagnosis. However, neurologists rely heavily on either ictal EEG or interictal epileptiform discharges (IEDs, such as sharp waves and spikes) to make a diagnosis. IEDs are the distinctive EEG patterns of epilepsy that appear when the subject is not having a seizure. Because seizures and IEDs occur unpredictably and sporadically, capturing them requires long-term EEG recordings lasting from hours to days, a tedious procedure that is costly and inconvenient.

- Typical signal processing and machine learning problems related to epileptic EEG:

  - coarse epilepsy diagnosis: distinguishing epileptic interictal EEG (with or without IEDs) from non-epileptic EEG

  - fine epilepsy/seizure diagnosis: identifying the type of seizure and/or epilepsy from ictal or interictal EEG

  - seizure detection: detecting seizure activity in epileptic subjects' EEG

  - seizure prediction: predicting the onset of seizure activity from epileptic subjects' EEG

  - focus localization: locating the epileptogenic zone (for focal seizures only)
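
To make the seizure detection problem a bit more concrete, here is a minimal sketch that flags high-activity windows in a single-channel signal using the line-length feature (the sum of absolute sample-to-sample differences). Everything in it is an assumption chosen for illustration: the signal is synthetic rather than real EEG, the function names `line_length` and `detect_windows` are hypothetical, and the window length and threshold factor are arbitrary. A practical detector would need real recordings, multiple channels, and proper validation.

```python
import math
import random


def line_length(window):
    """Sum of absolute differences between consecutive samples."""
    return sum(abs(b - a) for a, b in zip(window, window[1:]))


def detect_windows(signal, fs, win_sec=1.0, factor=3.0):
    """Flag non-overlapping windows whose line length exceeds
    `factor` times the median line length over all windows."""
    n = int(win_sec * fs)
    feats = [line_length(signal[i:i + n])
             for i in range(0, len(signal) - n + 1, n)]
    baseline = sorted(feats)[len(feats) // 2]  # upper median for even counts
    return [f > factor * baseline for f in feats]


# Synthetic stand-in for EEG (assumption): 10 s of unit-variance noise with a
# large 5 Hz oscillation between 4 s and 6 s playing the role of a seizure.
random.seed(0)
fs = 100  # samples per second
signal = []
for k in range(10 * fs):
    x = random.gauss(0.0, 1.0)
    if 4 * fs <= k < 6 * fs:
        x += 30.0 * math.sin(2 * math.pi * 5.0 * k / fs)
    signal.append(x)

flags = detect_windows(signal, fs)
print([i for i, flagged in enumerate(flags) if flagged])  # prints [4, 5]
```

Using the median as the baseline keeps the threshold from being dragged upward by the burst itself, which is why the two seizure-like windows stand out even though they are part of the data used to set the threshold.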

References:

1. For a complete list of seizure types and epilepsy types, see Tables 9-1 and 9-2 on pages 121-122 of this NICE (UK) guideline: http://www.nice.org.uk/guidance/cg137/resources/cg137-epilepsy-full-guideline3. The tables are reprinted from the 1981 and 1989 ILAE classifications.

The Stellate system stores its recordings in SIG, a proprietary format, so we can't apply our own algorithms directly to data recorded with it. Stellate does provide a way to export the data to EDF (European Data Format) files, which I can then open with other open-source software.

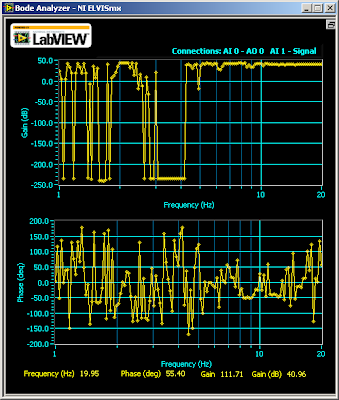
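
Because EDF is an openly documented format, an exported file can also be inspected directly in code. The sketch below parses the 256-byte main header, with field widths taken from the EDF specification. The names `EDF_HEADER_FIELDS`, `read_edf_main_header`, and `_field` are hypothetical, and the demo header is built by hand for illustration; it is not a Stellate export.

```python
import io

# Fixed-width ASCII fields of the EDF main header, in order: (name, bytes).
EDF_HEADER_FIELDS = [
    ("version", 8),
    ("patient_id", 80),
    ("recording_id", 80),
    ("start_date", 8),        # dd.mm.yy
    ("start_time", 8),        # hh.mm.ss
    ("header_bytes", 8),      # total header size, including signal headers
    ("reserved", 44),
    ("num_records", 8),       # -1 if unknown
    ("record_duration", 8),   # seconds per data record
    ("num_signals", 4),
]


def read_edf_main_header(stream):
    """Parse the 256-byte EDF main header from a binary stream."""
    header = {}
    for name, width in EDF_HEADER_FIELDS:
        raw = stream.read(width)
        if len(raw) != width:
            raise ValueError("truncated EDF header")
        header[name] = raw.decode("ascii", errors="replace").strip()
    for name in ("header_bytes", "num_records", "num_signals"):
        header[name] = int(header[name])
    header["record_duration"] = float(header["record_duration"])
    return header


def _field(value, width):
    """Left-justify a value in a space-padded ASCII field (demo helper)."""
    return str(value).ljust(width).encode("ascii")


# Hand-built two-signal header used only to exercise the parser.
demo = b"".join([
    _field("0", 8), _field("X X X X", 80), _field("Startdate X X X X", 80),
    _field("01.01.00", 8), _field("00.00.00", 8), _field(256 + 2 * 256, 8),
    _field("", 44), _field(10, 8), _field(1, 8), _field(2, 4),
])
header = read_edf_main_header(io.BytesIO(demo))
print(header["num_signals"], header["header_bytes"])  # prints 2 768
```

For a real export, the same function could be called on a file opened in binary mode. A quick sanity check is that `header_bytes` should equal `256 + 256 * num_signals`.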

The EDF files exported from the Stellate system also contain a bug. Consider the EDF records behind the plot above, which start like this: